Hey @harsh_tech,

I’ve had some time to think on this, and although having four ALBs makes your scenario slightly different from what I’ve done before, the configuration is pretty much the same.

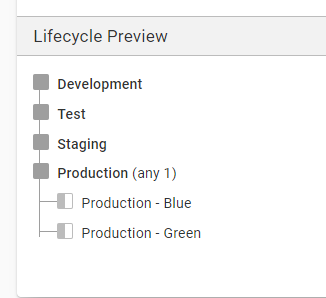

I’m assuming that your lifecycle in Octopus is development, test, staging, and production.

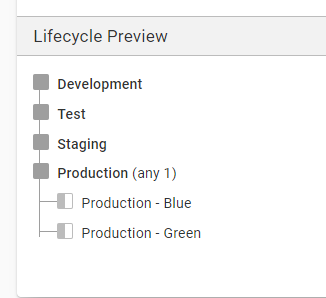

First, in Octopus, you will need to create another production environment in your lifecycle and call it production-blue. Then either rename the current production environment to production-green or create a new environment with that name. Your Octopus lifecycle will then look like development, test, staging, production-blue, and production-green. The lifecycle should allow you to deploy to either blue or green after staging.

You can check this out here in our samples.

I imagine the machines running behind ALB 1 & 2 are currently registered with Octopus to one environment, production? This will need to change so that those machines are registered to production-blue. Machines running behind ALB 4 & 5 need to be registered to production-green.

At this point, you have one set of machines running the production workload under one environment, and another set of machines sitting idle under the other production environment.

During deployment in Octopus, you can choose to deploy your new release to the environment that is sitting idle with no user traffic on it, knowing the new version won’t affect your current live service.

The next decision is how to move your user traffic from ALB 1 & 2 to ALB 4 & 5 when you have finished testing the new release.

You will need a script that instructs Route 53 to point traffic to the other set of load balancers (and back again if there is a problem, and for future deployments). This script can be included in an Octopus runbook and run manually, or it could be run at the end of every deployment process. At the end of the deployment to the idle environment, you could have a manual intervention step that pauses the deployment and, once approved, runs the script to swap Route 53 over to the new environment.
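To give a rough idea of what that swap script might look like, here is a minimal Python sketch. It assumes your DNS records are Route 53 alias A records pointing at the ALBs and that boto3 is available wherever the script runs. The names `AlbTarget`, `build_change_batch`, and `swap_traffic` are just ones I made up for illustration, and every hostname and zone ID is a placeholder, so treat it as a starting point rather than a finished script.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlbTarget:
    """Alias details for one ALB (hypothetical helper, placeholder values)."""
    dns_name: str        # the ALB's DNS name
    hosted_zone_id: str  # the ALB's canonical hosted zone ID, not your domain's zone


def build_change_batch(record_name: str, target: AlbTarget, comment: str) -> dict:
    """Build a Route 53 ChangeBatch that repoints an alias A record at `target`."""
    return {
        "Comment": comment,
        "Changes": [
            {
                # UPSERT creates the record if missing, otherwise overwrites it
                "Action": "UPSERT",
                "ResourceRecordSet": {
                    "Name": record_name,
                    "Type": "A",
                    "AliasTarget": {
                        "HostedZoneId": target.hosted_zone_id,
                        "DNSName": target.dns_name,
                        "EvaluateTargetHealth": True,
                    },
                },
            }
        ],
    }


def swap_traffic(domain_zone_id: str, record_name: str, target: AlbTarget, comment: str):
    """Send the change to Route 53. Needs AWS credentials with Route 53 access."""
    import boto3  # imported here so the builder above can be used without boto3

    client = boto3.client("route53")
    return client.change_resource_record_sets(
        HostedZoneId=domain_zone_id,
        ChangeBatch=build_change_batch(record_name, target, comment),
    )
```

With two ALBs per environment you would call `swap_traffic` once per DNS record, passing the matching green (or blue) ALB each time. Swapping back is the same call with the other environment's targets, which is why it fits nicely in a runbook.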

You can check out a runbook we have here that changes AWS target groups to point to different EC2 instances.

Hopefully this gives you a start, but please ask if you have any further questions!

Thanks,

Adam